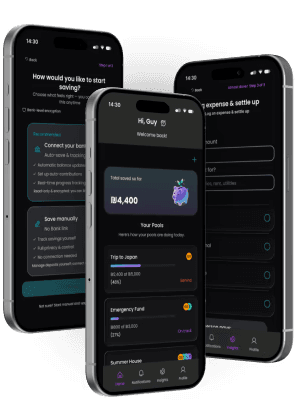

Pool | Collaborative & Personal Savings

AI-powered civic platform simplifying eligibility, document workflows, and public service processes.

Problem Context

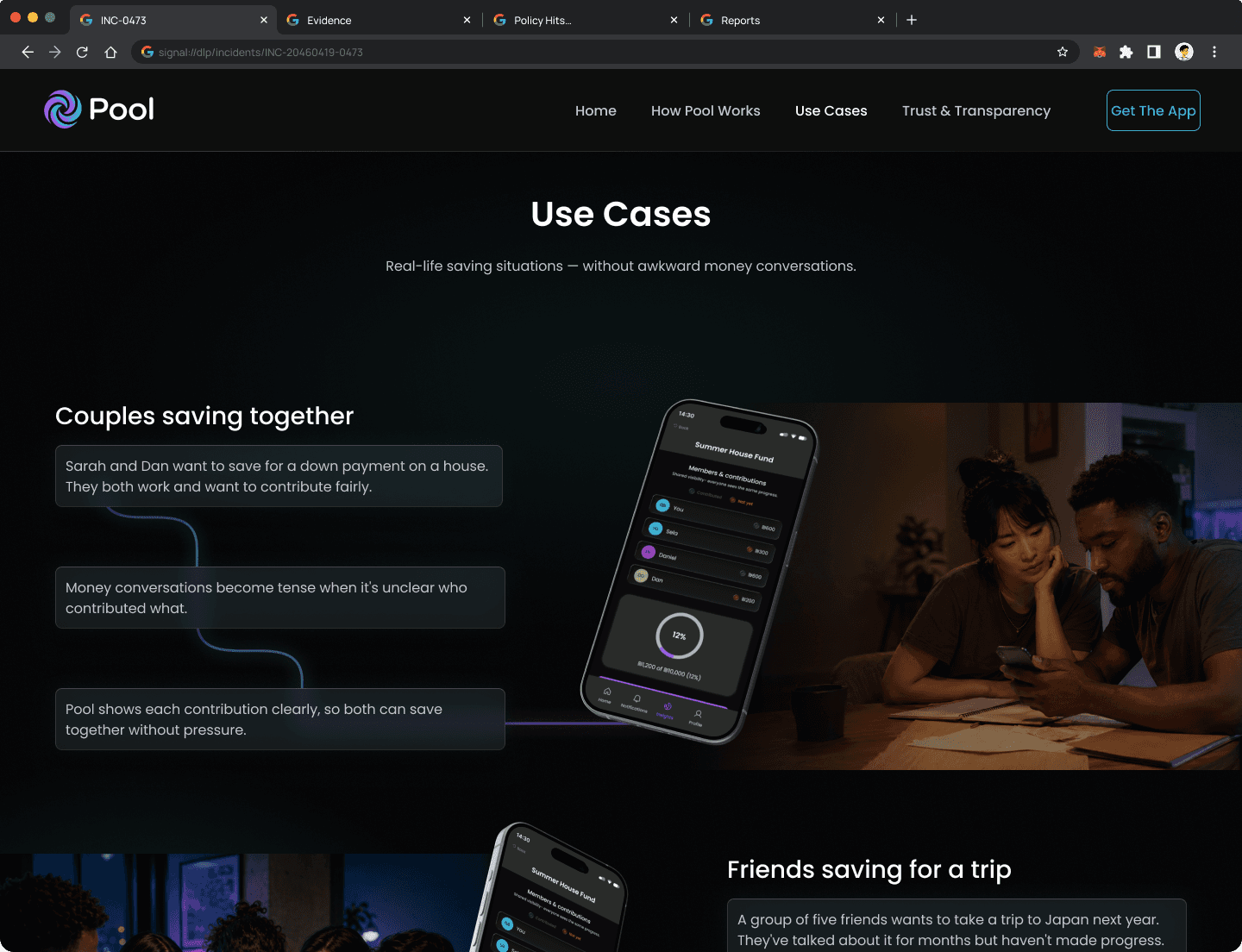

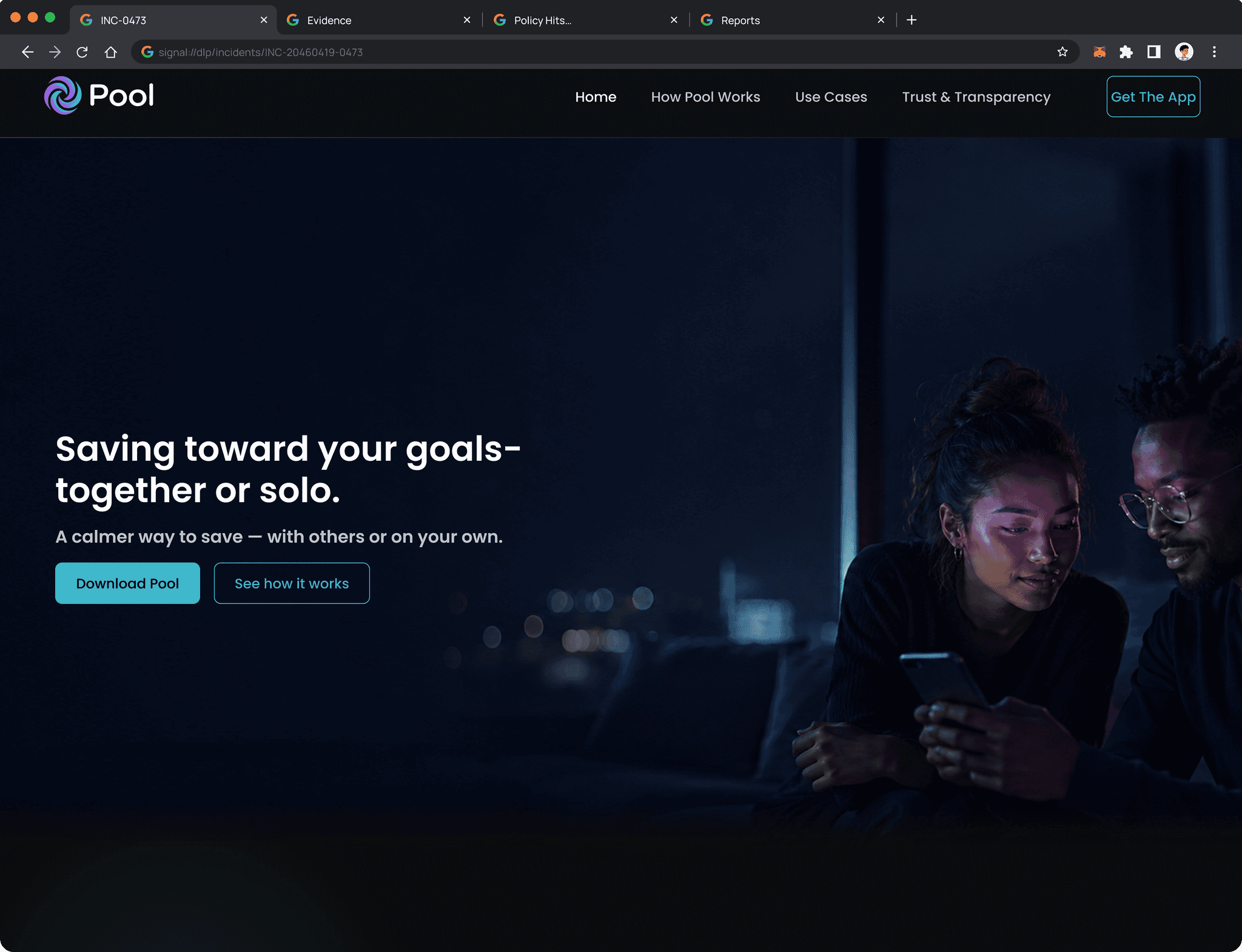

Saving toward a shared goal breaks down fast. Without one place to track who contributed what, groups fall back on messages and mental math. Pool gives everyone a shared view that updates automatically, no check-ins needed.

Designed in dialogue with Figma Make

Key screens and component variants were explored using Figma Make as an active design partner: generating layout alternatives, testing edge cases, and stress-testing the contribution flow before committing to final decisions.

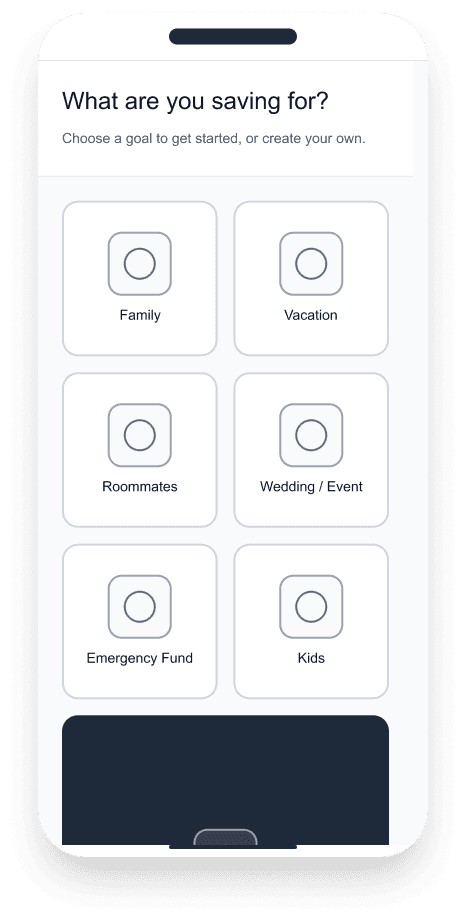

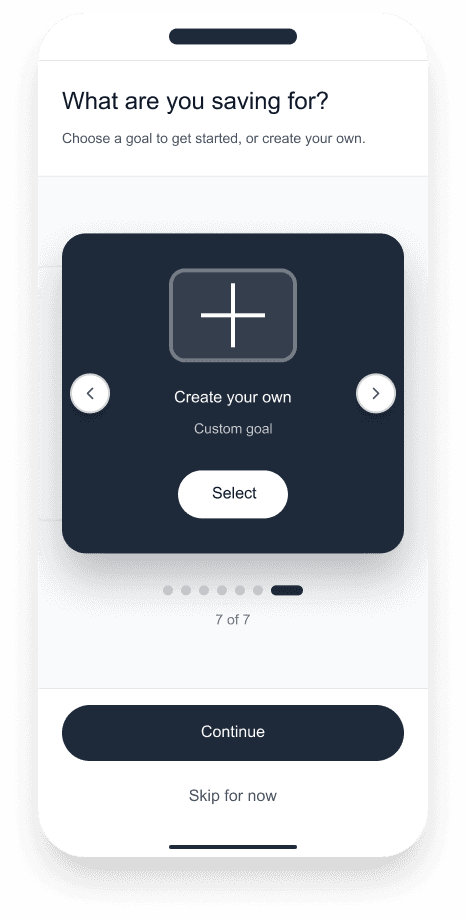

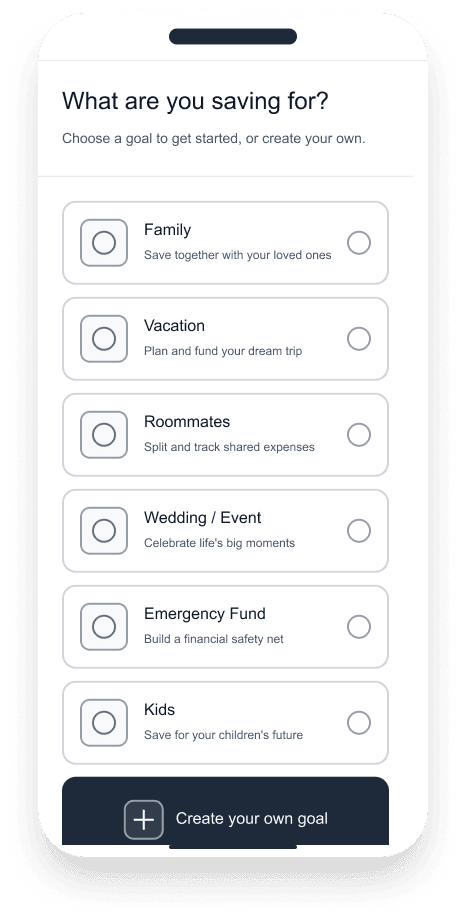

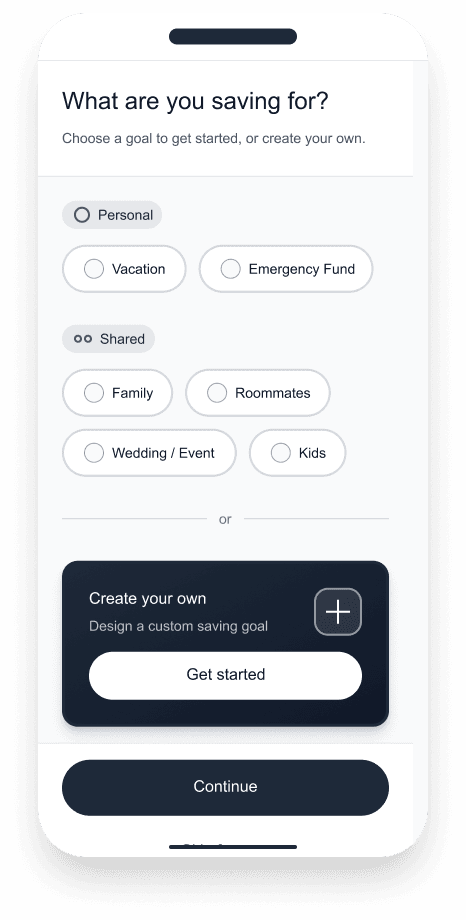

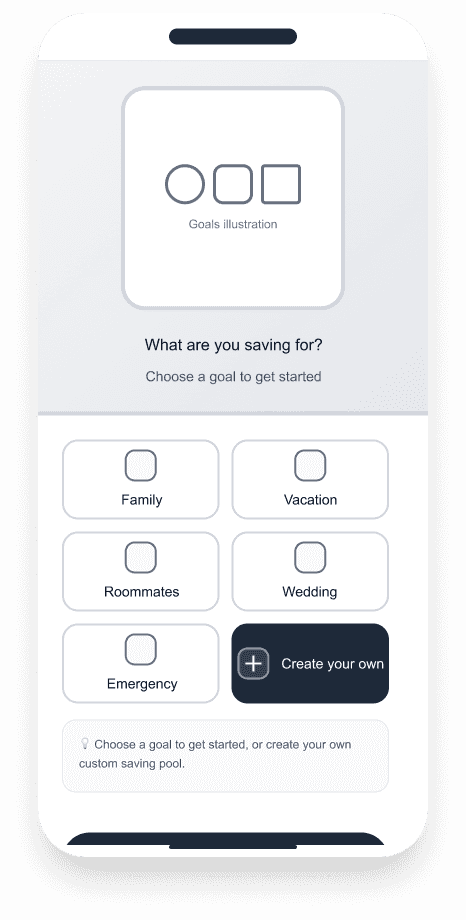

Saving goals screen options

Option 1 - Grid

Option 2 - Carousel

Option 3 - List

Option 4 - Segments

Option 5 - Split

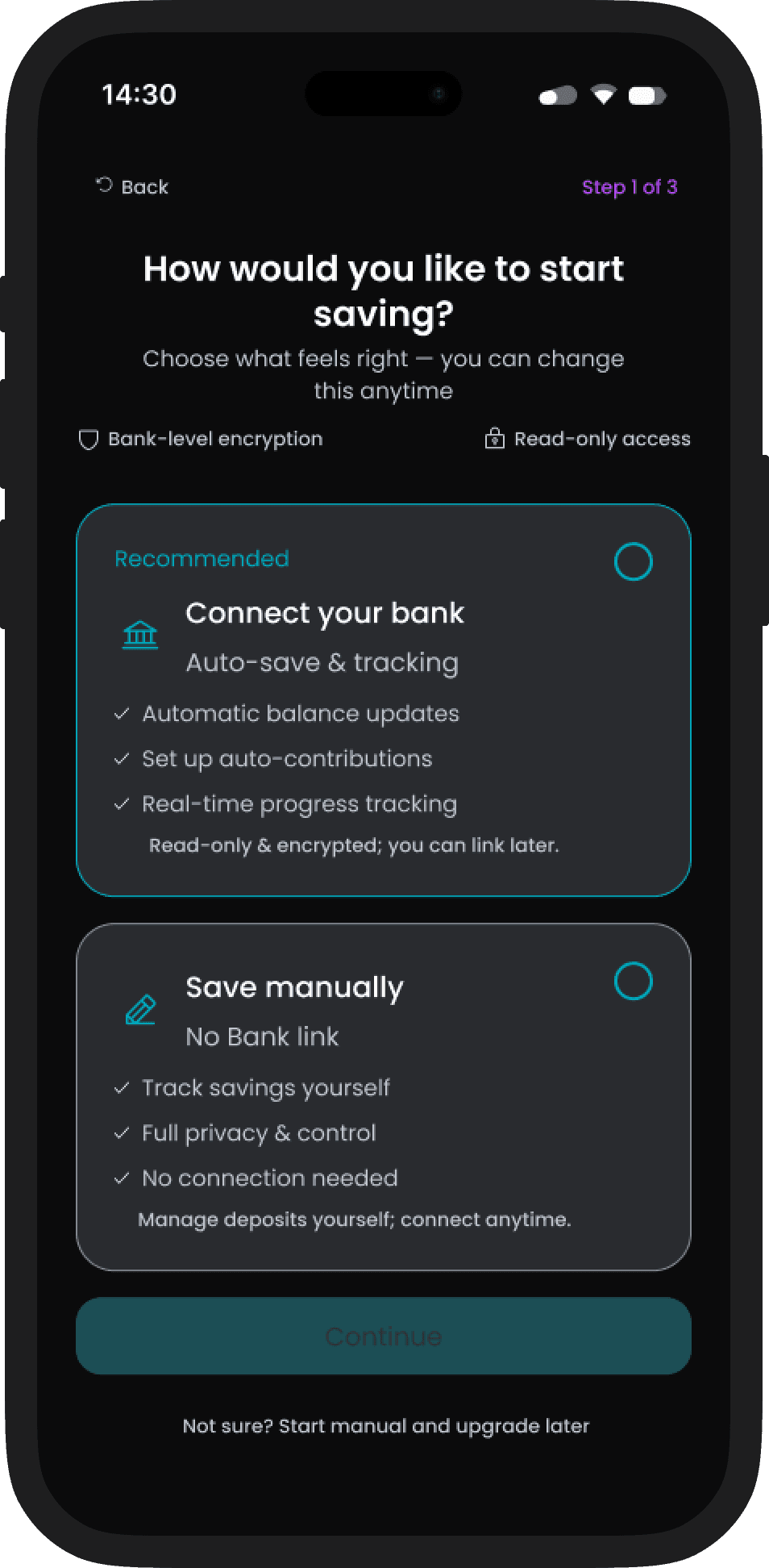

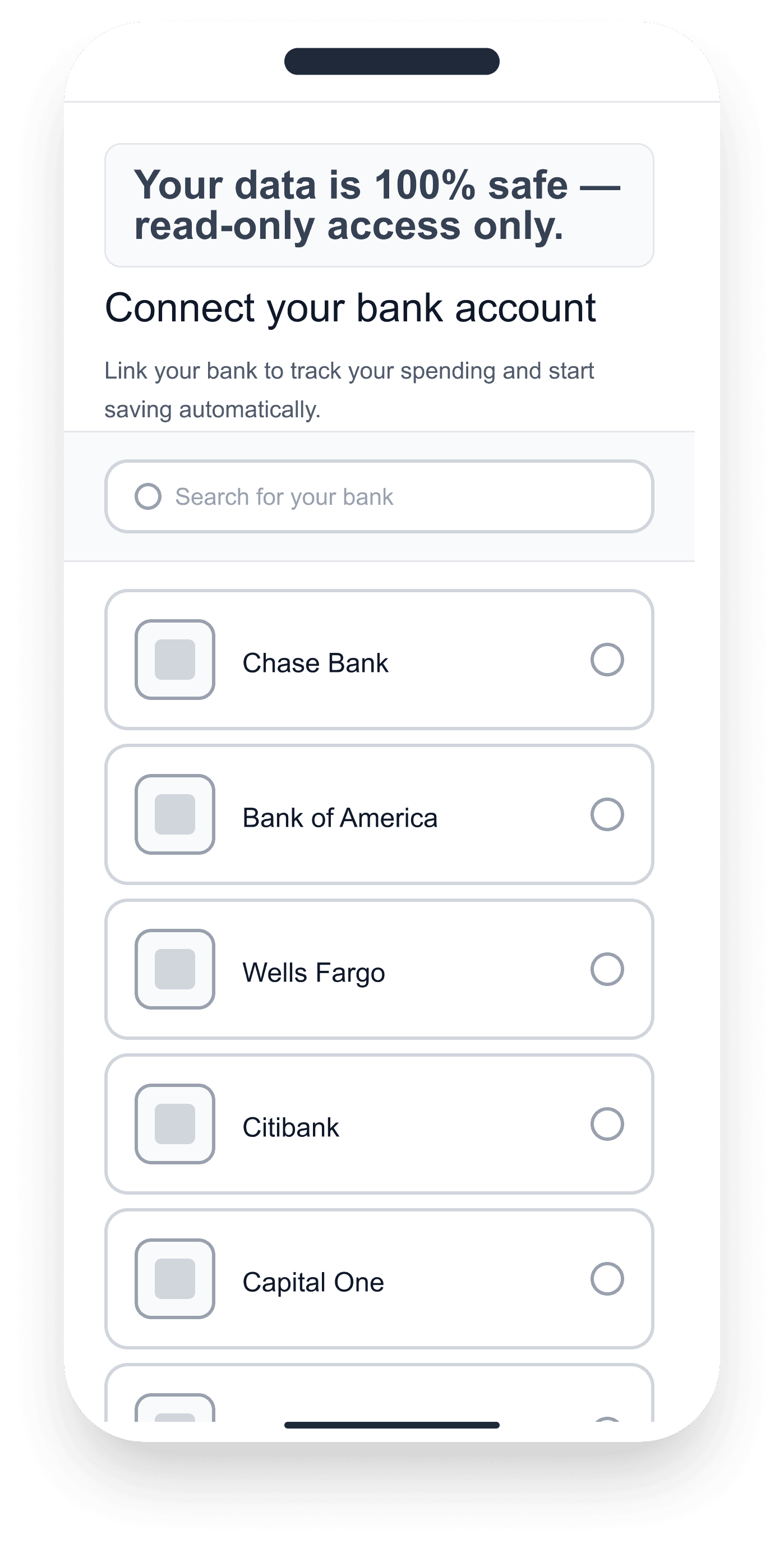

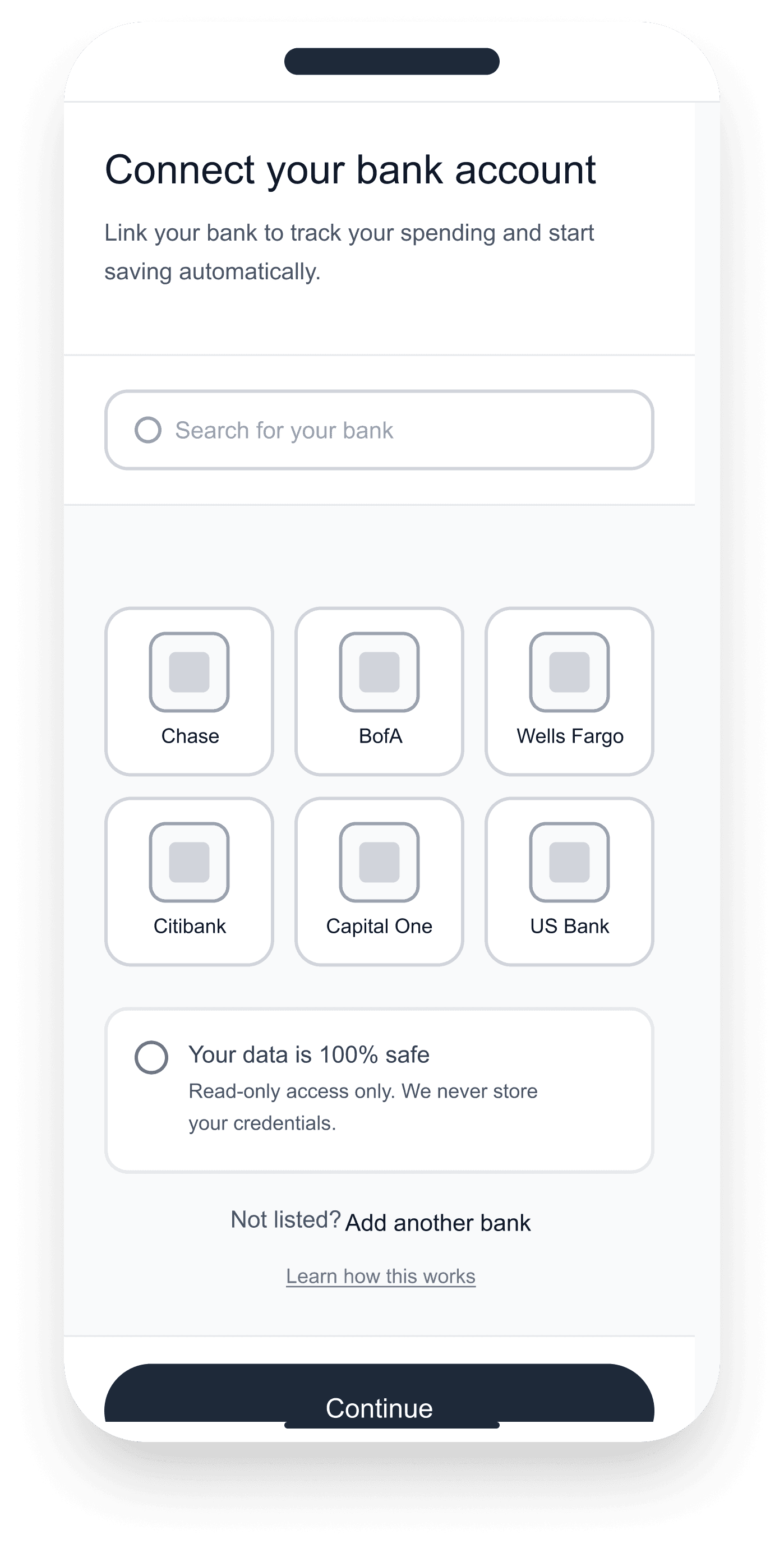

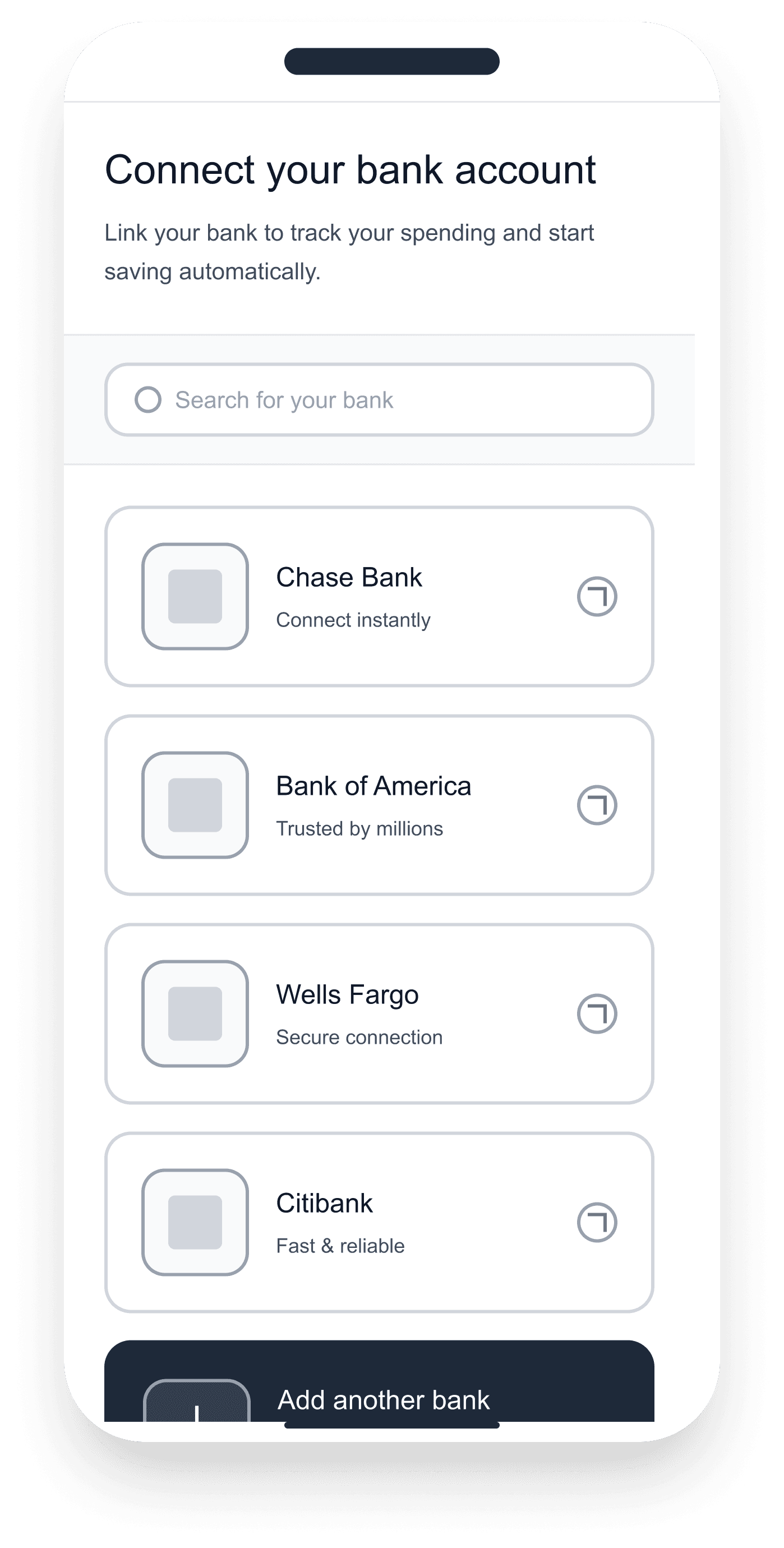

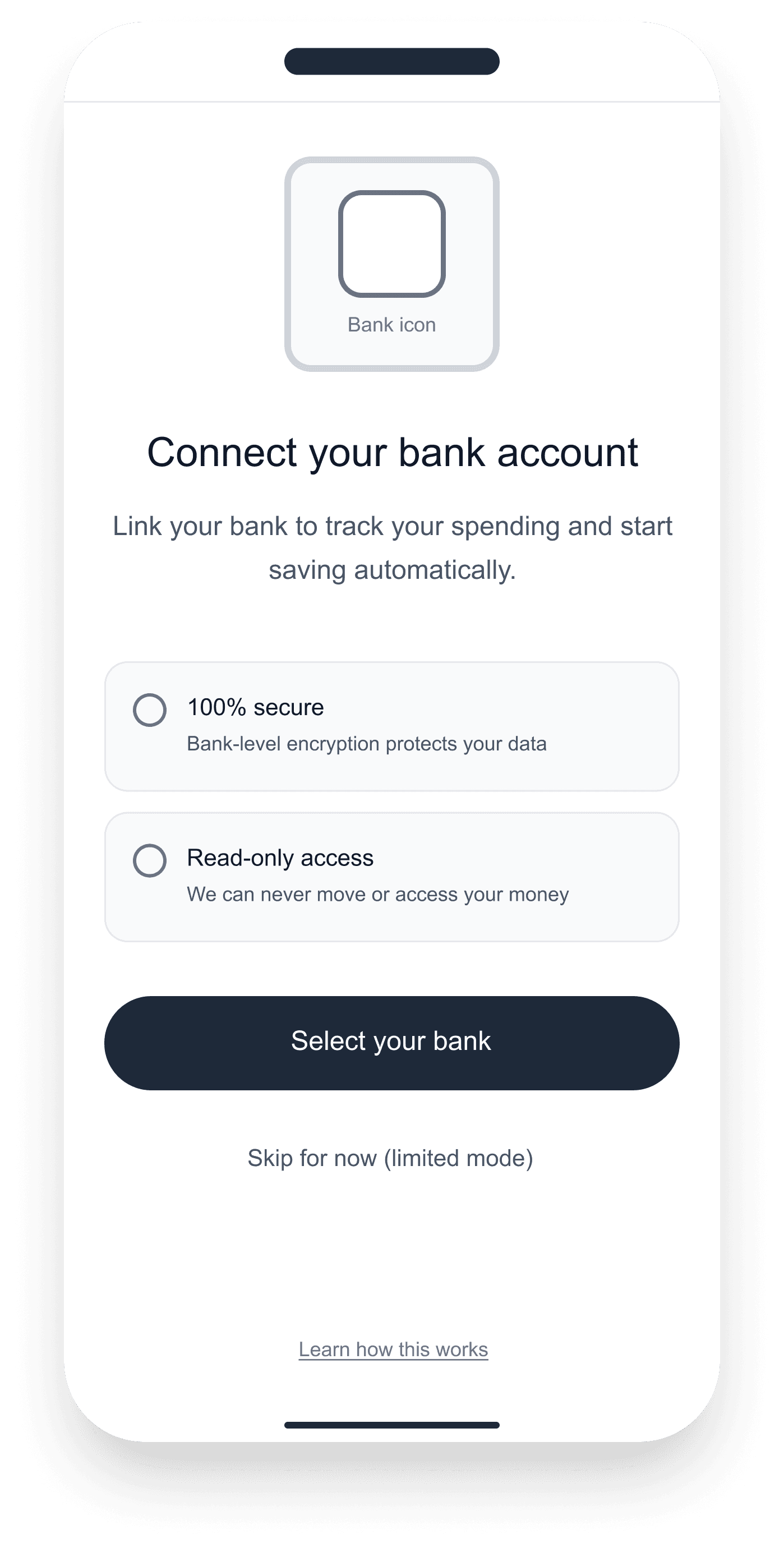

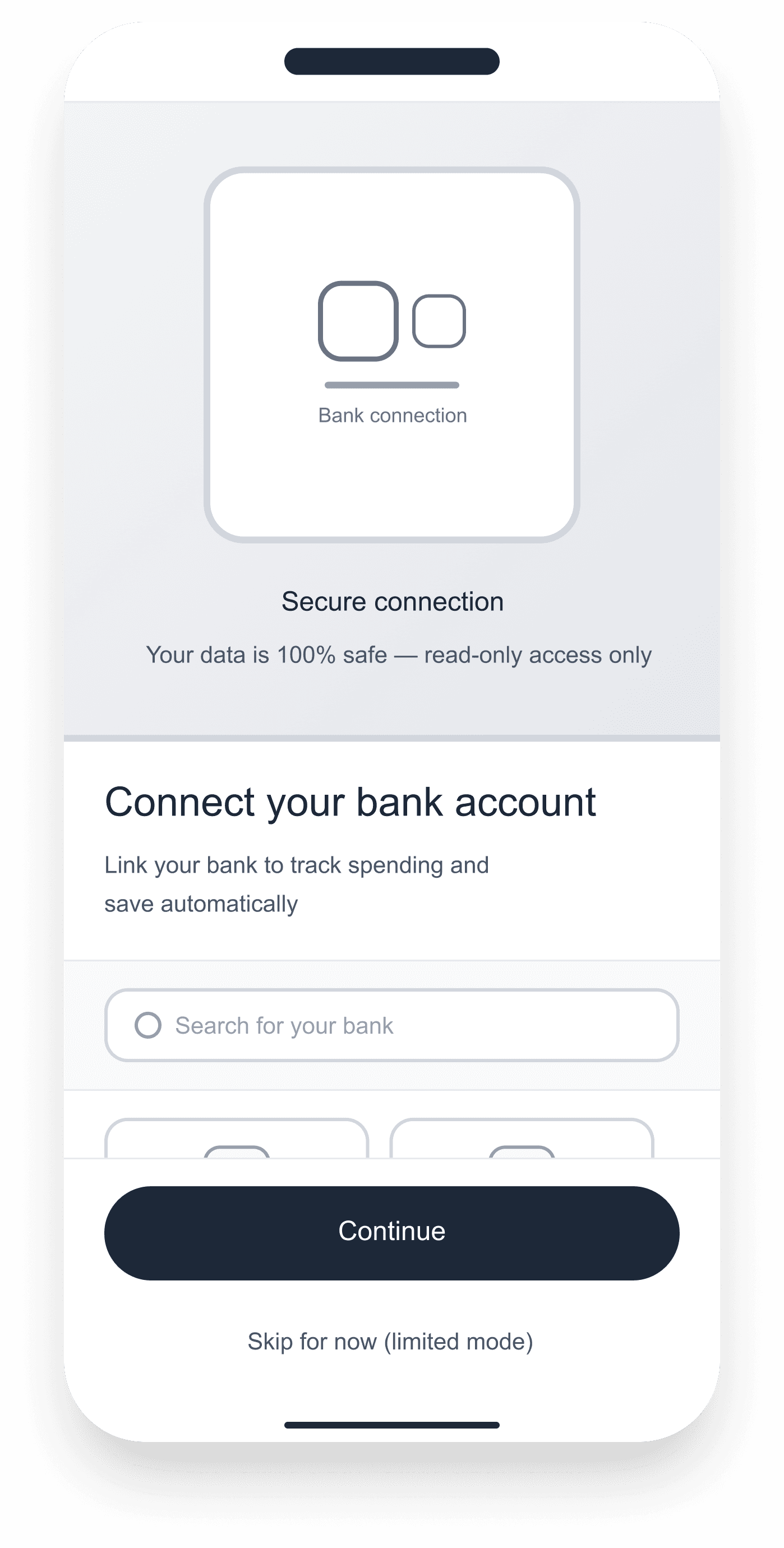

Connect Bank screen options

Option 4 - Modal

Design Decisions & Trade-offs

One Mental Model

Pool is built around one idea: define a target, track contributions, see where things stand. Whether saving alone or with a group, the structure is identical — one goal, one pool, one shared view of progress. This single mental model drives every screen in the product.

System Design

The system is built around a single contribution model that is transparent, role-based, and immutable. Four layers govern how money moves, who sees what, and how the interface responds automatically.

1 - Contribution rules

Each pool defines its own split logic and input method, either manual or bank-synced.

2 - Goal tracking

Progress is calculated automatically from validated transactions, so no manual updates are needed.

3 - Member roles

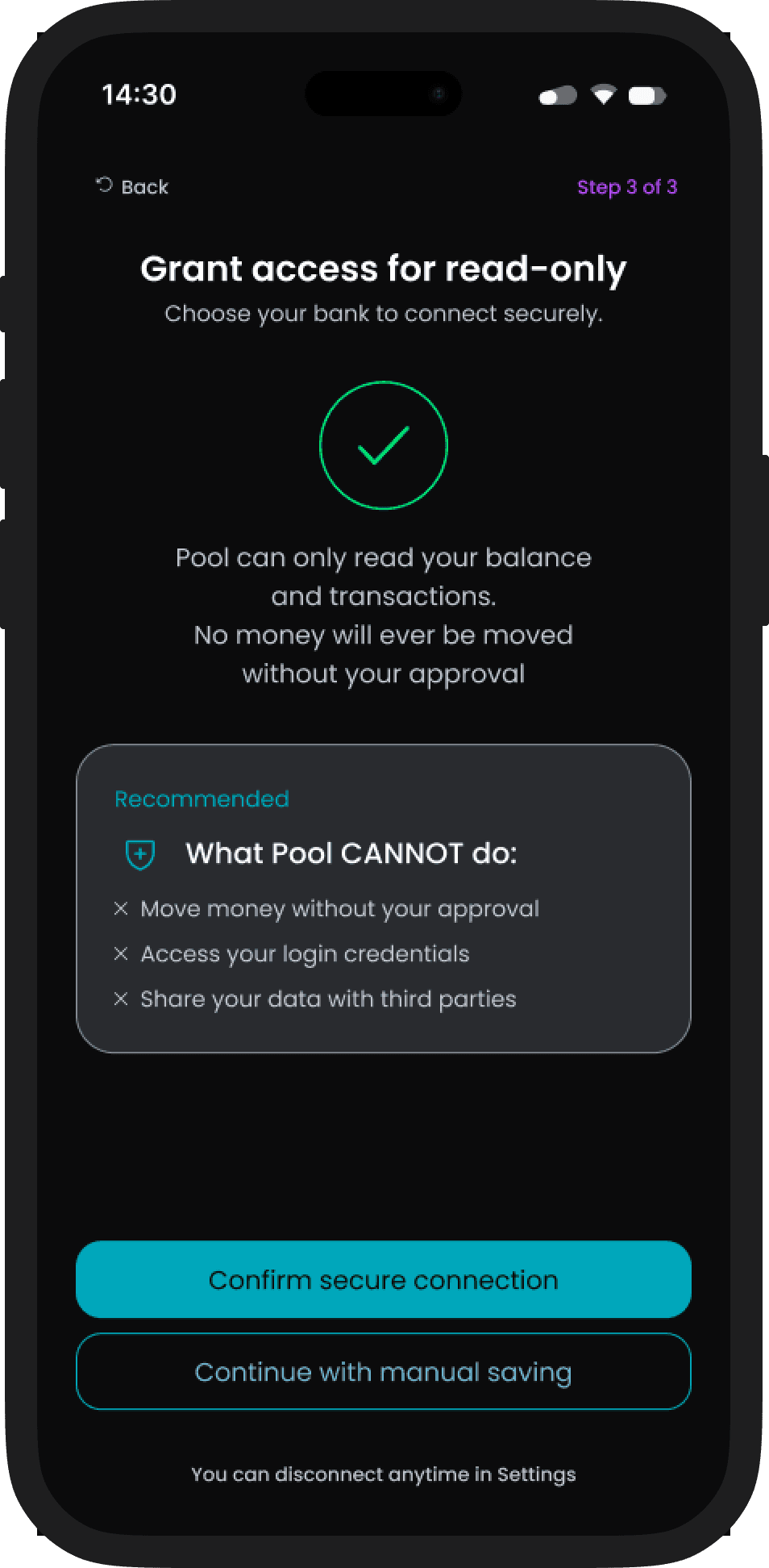

Visibility and permission rules are set at pool creation. No one can move money without an explicit action.

4 - Transparency layer

Every member sees a shared live view of progress and individual inputs, updated on every validated transaction.
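As a rough illustration of the goal-tracking and transparency layers, the sketch below derives progress and per-member totals from validated contributions only. It is a minimal sketch under stated assumptions, not Pool's actual implementation: the `Contribution` record, the `"manual"` and `"bank_sync"` labels, and the `pool_progress` function are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)  # frozen mirrors the "immutable" contribution model
class Contribution:
    member: str
    amount: Decimal
    source: str       # "manual" or "bank_sync" (hypothetical labels)
    validated: bool


def pool_progress(target: Decimal, contributions: list[Contribution]) -> dict:
    """Derive goal progress and per-member totals from validated contributions."""
    if target <= 0:
        raise ValueError("target must be positive")
    per_member: dict[str, Decimal] = {}
    for c in contributions:
        if not c.validated:
            continue  # unvalidated entries never affect progress
        # The input method (c.source) is deliberately ignored here.
        per_member[c.member] = per_member.get(c.member, Decimal("0")) + c.amount
    total = sum(per_member.values(), Decimal("0"))
    percent = min(Decimal("100"), (total / target * 100).quantize(Decimal("0.1")))
    return {"total": total, "percent": percent, "per_member": per_member}


summary = pool_progress(
    Decimal("1000"),
    [
        Contribution("Dana", Decimal("400"), "manual", True),
        Contribution("Omar", Decimal("200"), "bank_sync", True),
        Contribution("Dana", Decimal("150"), "manual", False),  # not yet validated
    ],
)
```

Because the calculation never branches on `source`, manual and bank-synced contributions count toward the goal in exactly the same way.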

The diagram below maps how these four layers translate into the token architecture. Foundation tokens define the grid and spacing rules. Surface and action tokens govern how contribution states appear. Status tokens trigger automatic responses when a payment is late or a goal is reached. Real-time goal tracking sits at the top, always visible, always current.

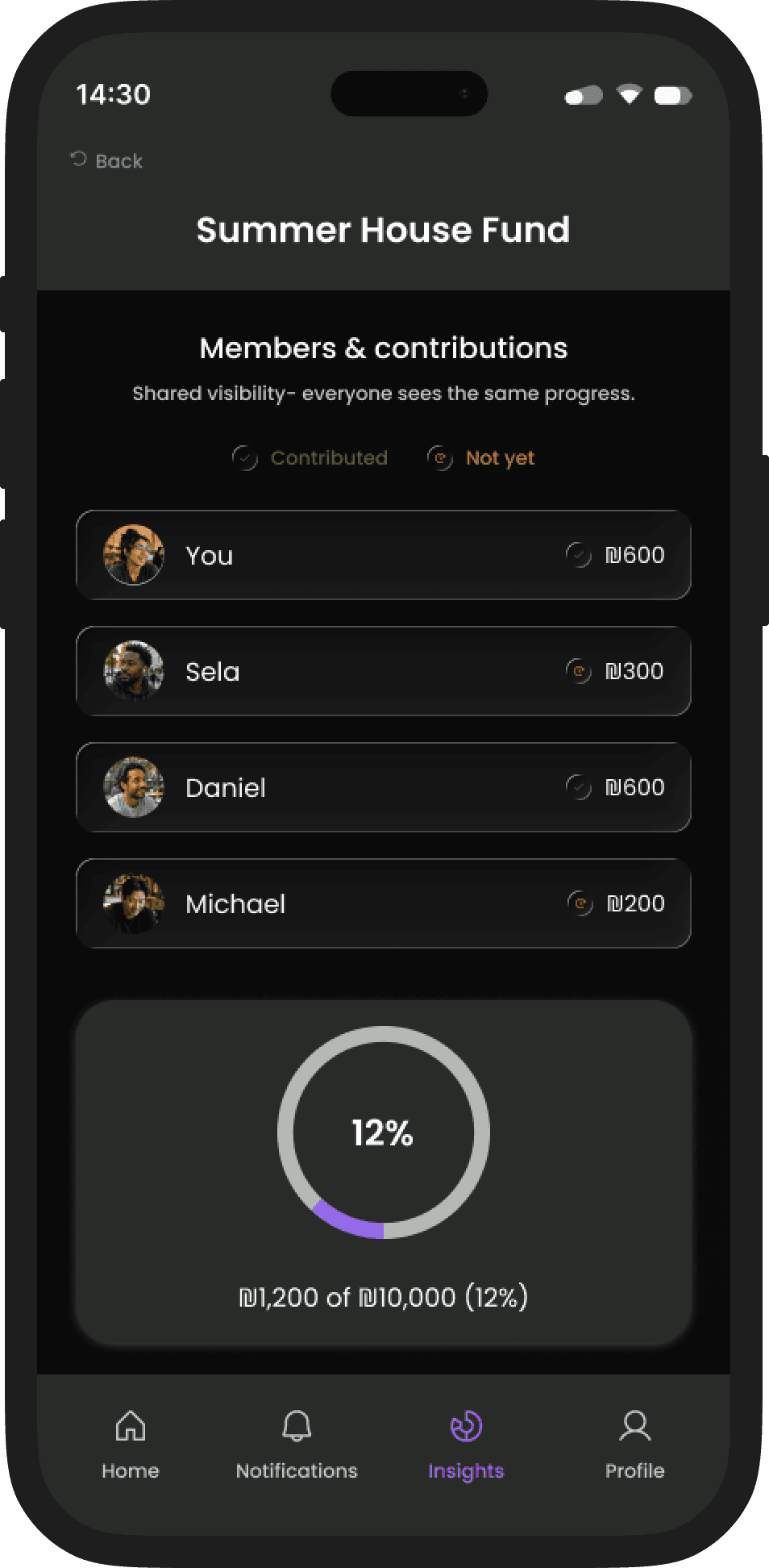
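The status-token behavior described above (an automatic response when a payment is late or a goal is reached) could be sketched as a small resolver. The token names, the `resolve_status` function, and the precedence rule (a reached goal outranks a late payment) are assumptions made for illustration and do not come from the actual token set.

```python
from datetime import date
from enum import Enum


class StatusToken(Enum):
    # Hypothetical token names, not the project's real tokens
    ON_TRACK = "status.on-track"
    LATE = "status.late"
    GOAL_REACHED = "status.goal-reached"


def resolve_status(saved: float, target: float, next_due: date, today: date) -> StatusToken:
    """Pick the status token the interface should apply for a pool."""
    if saved >= target:
        return StatusToken.GOAL_REACHED  # assumed to take precedence over lateness
    if today > next_due:
        return StatusToken.LATE
    return StatusToken.ON_TRACK
```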

Key Screens

Core user flows demonstrating contribution clarity and shared visibility.

Transparency

Group accountability

Passive visibility

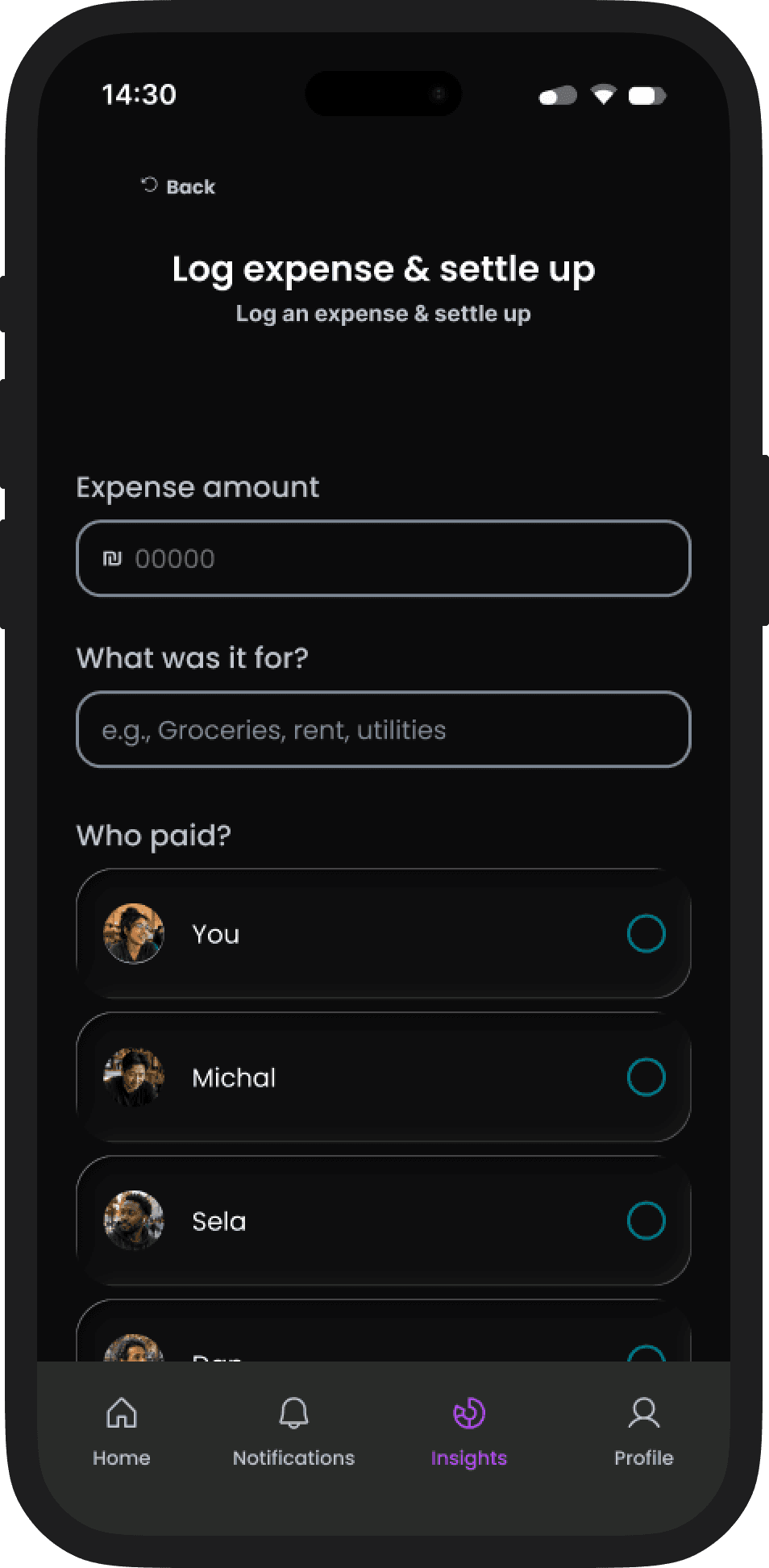

Contribution Flow

Flexible input

Read-only bank sync

Trust by design

Start manual and connect later; read-only bank sync is optional

Progress is calculated the same way regardless of input method

Expense logging

Settle up

No social friction

Zchut-AI | AI-Powered Civic Rights Platform

Designed a state-driven AI assistant that helps Israeli citizens discover and manage their social rights, with a focus on accessibility, transparency, and trust.

Role: Product Design & System Architecture (Independent Project)

Deliverables: Mobile app · Web dashboard · AI interaction system

Focus: AI transparency · Accessibility · Institutional trust

Bureaucracy wasn't designed for everyone

Israel's government services are fragmented, jargon-heavy, and increasingly digital-first. For two large population groups - seniors and new immigrants - this isn't just inconvenient. It's exclusionary.

Isaac, 72 — Retired engineer, Tel Aviv

"I just want to know what I'm entitled to without feeling like I need a law degree to use an app."

Spends hours searching for health benefits he may already qualify for

Complex navigation and bureaucratic language trigger cognitive overload

RESEARCH INSIGHT

Our initial assumption: users need more information.

What we found: they need interpretation and confidence to act.

The information exists on platforms like Kol Zchut. The barrier is linguistic complexity and the anxiety of acting on something you don't fully understand.

One platform. Two interaction models.

Different tasks require different levels of AI involvement. The system separates deterministic processes from open-ended interpretation and routes each to the appropriate interaction model.

Guided Flow

Structured, step-by-step

Used for: eligibility checks, document submission, deadline tracking

Deterministic: clear inputs, clear outputs

Isaac: "Am I eligible for home-care benefit?" → structured checklist

Conversational AI

Natural language input

Used for: rights inquiry, document interpretation, escalation

Interpretive: ambiguous inputs, confidence-tiered outputs

Elena: scans tax letter → AI summarizes, flags urgency

Both models feed into the same underlying rights database. The interaction layer adapts; the data layer doesn't change.
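The routing between the two interaction models can be sketched as a lookup on task type. This is a minimal sketch: the task identifiers, the `route` function, and the choice to send unrecognized tasks to the conversational model are assumptions for illustration, not the system's documented behavior.

```python
from enum import Enum


class InteractionModel(Enum):
    GUIDED_FLOW = "guided_flow"
    CONVERSATIONAL_AI = "conversational_ai"


# Task lists taken from the two model descriptions; identifiers are hypothetical.
GUIDED_TASKS = {"eligibility_check", "document_submission", "deadline_tracking"}
CONVERSATIONAL_TASKS = {"rights_inquiry", "document_interpretation", "escalation"}


def route(task: str) -> InteractionModel:
    """Send deterministic tasks to the guided flow, everything else to conversation."""
    if task in GUIDED_TASKS:
        return InteractionModel.GUIDED_FLOW
    # Assumption: anything not known to be deterministic is treated as open-ended.
    return InteractionModel.CONVERSATIONAL_AI
```

Under this sketch, Isaac's home-care eligibility question resolves to `route("eligibility_check")` and lands in the guided flow, while Elena's scanned letter resolves to `route("document_interpretation")` and lands in the conversational model.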

The AI knows what it doesn't know

In a civic context, an AI that presents uncertain information with false confidence causes real harm - missed deadlines, wrong claims, financial loss. The system uses a three-state confidence model to make uncertainty visible before the user acts.

| State | When it triggers | What the user sees | What the system does |
| --- | --- | --- | --- |
| Confident | Query is in-scope, high confidence score | Full answer + source tag | Logs interaction |
| Partial | Low confidence or edge-of-scope | Answer + "Verify with an advisor" warning | Flags for review queue |
| Escalate | Out-of-scope or critical legal domain | "This requires professional review" (no AI answer shown) | Routes to advisor with full context |

CONCEPT PROOF

Partial response detected. User sees inline caution state. Advisor queue entry created with full query context.

Escalation is a designed transition, not a fallback. The user sees the boundary before they hit it.
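One way to read the three-state confidence model is as a small classifier followed by a presentation step. The sketch below is illustrative only: the `0.8` threshold, the `Scope` values, and the `classify` and `respond` names are assumptions, since the case study does not specify how scores or scope are computed.

```python
from dataclasses import dataclass
from enum import Enum


class Scope(Enum):
    IN = "in-scope"
    EDGE = "edge-of-scope"
    OUT = "out-of-scope"


class State(Enum):
    CONFIDENT = "confident"
    PARTIAL = "partial"
    ESCALATE = "escalate"


HIGH_CONFIDENCE = 0.8  # assumed threshold, not a documented value


@dataclass
class Assessment:
    scope: Scope
    score: float
    critical_legal: bool = False


def classify(a: Assessment) -> State:
    if a.scope is Scope.OUT or a.critical_legal:
        return State.ESCALATE
    if a.scope is Scope.IN and a.score >= HIGH_CONFIDENCE:
        return State.CONFIDENT
    return State.PARTIAL  # low confidence or edge-of-scope


def respond(a: Assessment, query: str, answer: str, source: str) -> dict:
    """Return what the user sees and what the system does for each state."""
    state = classify(a)
    if state is State.CONFIDENT:
        return {"state": state, "shown": f"{answer} [source: {source}]",
                "action": "log_interaction"}
    if state is State.PARTIAL:
        return {"state": state, "shown": f"{answer} (Verify with an advisor)",
                "action": "flag_for_review"}
    # Escalate: no AI answer is shown; the advisor gets the full query context.
    return {"state": state, "shown": "This requires professional review",
            "action": "route_to_advisor", "context": query}
```

Checking escalation first means a high score can never override an out-of-scope or critical legal query, which matches the idea that the boundary is shown before the user hits it.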

Design Decisions & Trade-offs

DECISION

Guided flow vs. open conversation

Structured flows reduce cognitive load for deterministic tasks. Conversational AI handles ambiguity. Separating the two prevents the system from feeling unpredictable.

Trade-off

Transparency vs. simplicity

Showing confidence states adds cognitive overhead. But hiding uncertainty in a civic context causes more harm than complexity. We chose to show the seams.

Trade-off

Escalation as a feature, not a fallback

Routing to a human advisor could feel like failure. We reframed it as a designed transition: the user sees the boundary before they hit it.

AI Data Flow & Extraction

To minimize cognitive load, the system acts as a "translator" between human natural language and complex bureaucratic requirements. Instead of forcing users to navigate through tedious forms, an LLM engine processes unstructured input—such as voice recordings or free text—extracts relevant entities (dates, names, statuses), and maps them directly into structured form fields. This architecture preserves the simplicity of a conversation while delivering the precision of a structured legal document.

Unstructured Input

Voice recordings or free text input from the user.

LLM Processing

NLP identifies intent and extracts key data entities.

Structured Output

Data is automatically injected into standard form fields.
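The three steps above can be sketched end to end. To keep the example self-contained and runnable, a few regular expressions stand in for the LLM extraction step; this is a deliberate simplification, not how the described engine works. The field names, the `STATUS_TERMS` list, and the day/month/year date format are likewise assumptions.

```python
import re
from datetime import date

# Hypothetical mapping from extracted entities to form field names
FORM_FIELDS = {"name": "applicant_name", "date": "event_date", "status": "status"}
STATUS_TERMS = ("retired", "widowed", "unemployed")  # illustrative only


def extract_entities(text: str) -> dict:
    """Stand-in for the LLM step: pull a name, a date, and a status from free text."""
    entities = {}
    m = re.search(r"[Mm]y name is ([A-Z][a-z]+(?: [A-Z][a-z]+)*)", text)
    if m:
        entities["name"] = m.group(1)
    m = re.search(r"\b(\d{1,2})/(\d{1,2})/(\d{4})\b", text)  # assumes dd/mm/yyyy
    if m:
        day, month, year = map(int, m.groups())
        try:
            entities["date"] = date(year, month, day).isoformat()
        except ValueError:
            pass  # impossible date: leave the field empty rather than guess
    lowered = text.lower()
    for term in STATUS_TERMS:
        if term in lowered:
            entities["status"] = term
            break
    return entities


def fill_form(text: str) -> dict:
    """Map extracted entities onto form fields; missing fields stay None."""
    entities = extract_entities(text)
    return {field: entities.get(key) for key, field in FORM_FIELDS.items()}


form = fill_form("My name is Isaac and I retired on 01/03/2019.")
```

Fields left as `None` mark what the conversation still has to ask for, so the structured form stays precise without forcing the user through it directly.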

Key Screens

Each screen resolves a specific tension between accessibility and trust.

14:30

Language

Hello, Isaac

Relevant rights and statuses have been updated for you

View updates

Scan a document

The AI will interpret it for you

Voice search

The voice assistant is available for questions

Your rights

Approved

Electricity discount for senior citizens

Last updated 20/01

In progress

Old-age pension (senior citizen)

Last updated 20/01

Approved

Rent assistance

Last updated 20/01

Settings

Documents

Tracking

Search

Home

Home Dashboard (Mobile)

What this proves: A senior user can understand their rights status, pending actions, and new entitlements at a glance without navigating menus.

Personalized rights summary surfaces proactively based on user profile, not search

Status indicators distinguish between 'available now,' 'pending,' and 'requires action' without legal jargon

Voice Assistant (Mobile)

What this proves: Voice input isn't a feature. It's an accessibility requirement for users who find typing in bureaucratic contexts intimidating.

Waveform UI provides real-time feedback that the system is listening and processing

Input is transcribed and confirmed before the AI responds, reducing anxiety about being misheard

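14:30

Language

Back

Voice assistant

Am I entitled to a discount on municipal property tax (arnona)..

Finish recording

14:30

Flash

Back

Detecting a letter from the National Insurance Institute (Bituah Leumi)...

Hold steady

The document will be scanned and analyzed automatically

Capture

Upload from gallery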

Document Scanner / AI Chat (Mobile)

What this proves: A new immigrant can resolve white-envelope anxiety in under 60 seconds.

OCR scan feeds directly into AI interpretation — no manual re-typing

AI response includes urgency classification: 'routine update' vs. 'deadline detected'
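The urgency classification could be approximated with simple pattern matching over the OCR text. This is only a sketch: the real system presumably relies on the AI interpretation step, and the patterns and the `classify_urgency` name below are assumptions chosen to show the two output labels.

```python
import re

# Illustrative cues that a letter carries a deadline (assumed, not exhaustive)
DEADLINE_PATTERNS = [
    r"\bno later than\b",
    r"\bdeadline\b",
    r"\bwithin \d+ days\b",
    r"\bby \d{1,2}/\d{1,2}/\d{4}\b",
]


def classify_urgency(letter_text: str) -> str:
    """Label scanned text as 'deadline detected' or 'routine update'."""
    lowered = letter_text.lower()
    if any(re.search(pattern, lowered) for pattern in DEADLINE_PATTERNS):
        return "deadline detected"
    return "routine update"
```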

AI Chat with Escalation (Desktop)

What this proves: When the AI reaches the edge of its competence, the handoff to a human is visible, designed, and context-preserving.

Escalation trigger is shown inline. The user understands why before they're redirected.

Advisor receives full conversation context. The user does not need to re-explain.

Benefits Dashboard with Deadlines (Desktop)

What this proves: The system's value isn't just answering questions. It's proactively surfacing entitlements users don't know to ask about.

Deadline tracking is visible and calendar-integrated, removing the fear of missing a critical date.

Progress indicators show where each benefit claim stands in the submission process

Outcome

Zchut-AI demonstrates that designing for extreme users - those most excluded by existing systems - produces a better product for everyone. The dual interaction model, confidence-tiered AI, and escalation system aren't accessibility features bolted on. They're the core architecture.

The project also proves a broader design thesis: AI in high-stakes civic contexts requires a trust layer, not just a response layer.

What I'd Measure

© 2026 Guy Bar-Sinai